The critical agenda for the human race is not only its own future, but the future of all higher life forms on Earth and the far-distant future of intelligence and higher consciousness.

This essay was initially written in 2016.

What is the future of humanity? And is there anything special about humanity that makes its future of special importance (say, as opposed to that of artificial intelligence)?

Are humans good for anything at all? Do they have any essential value — or are they just a blight upon the Earth?

If they do have value, how many should there be? Might it be better to have ten billion, or is ten million quite enough? What should humanity's purpose and goals properly be (to justify its voracious consumption)? What should human civilization strive to achieve?

Might the future in, say, a century, be better off without humanity entirely or with a radically reduced human population?

And now, some answers. Crucially, we must ensure that our biosphere and the highest aspects of our civilization survive. Why? Because the loss of our biosphere and the complete loss of humanity would close off an incomparably bright, distant future of immeasurable promise and beauty. That future has been in the making on Earth for billions of years and is now threatened by us.

The Doomsday Clock of the Bulletin of the Atomic Scientists stands at 90 seconds before midnight (as of 2023).

Also, see this brief TED Talk by Sir Martin Rees (Britain's Astronomer Royal); it is essential viewing. (Lord Rees warns of the imminent 50:50 likelihood of humanity's complete destruction.)

Why would the complete loss of humanity be such an incomparable tragedy? Because we have a great capacity for wisdom and good, which, if carefully directed, may pave the way to an ultra-long-lasting civilization of transcendent worth.

The principal goal of humanity must be the survival and expansion of wisdom. By wisdom I mean all of wise thought and action, the main goal of which is the elaboration of truth, beauty, goodness, and love.

Truth is what science strives to elaborate: an accurate description of the world (as found in undisturbed nature but also in our engineered artifacts).

Beauty is to be found in undisturbed nature but also in our artistic works.

Goodness: compassion, fairness, altruism, courage, kindness, self-control, transcendence.

Love motivates our goodness, which encompasses all our service to our families, our communities, the family of man, and to our entire biosphere. (Love also motivates our playfulness: our enjoyment, our exploration, and fun.)

But, in sharp contrast to the above, humanity has a massive evil aspect. Humans have wrought great destruction upon one another as well as upon planet Earth. Our wars have killed millions. Our nuclear arsenal is poised to annihilate humanity; see, e.g., this TED talk by atmospheric scientist Brian Toon.

Our animal nature, evolved over billions of years, cuts both ways. Yes, it underlies our pursuit of truth, beauty, goodness, and love. But it has also propelled our unchecked reproduction, right to the edge of the Malthusian Cliff.

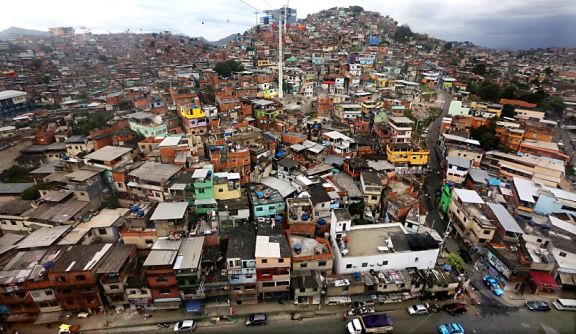

Our expanding population of 7.75 billion (in 2016) has required the conversion of Earth's resources into our feedstock, our water, our air, our energy, and our machines. We are so self-deluded that our massive verminous nature is not even viewed as a problem, despite the pervasiveness of our internet, TV, and movies. Despite our similarity to out-of-control rats and locusts, the problem is not even mentioned in our political discourse. The explosive expansion of humanity, somehow, has come to be viewed as an undisputed good. What insanity!

So, might a massive die-back of humanity assist the survival of our biosphere? Yes, it would, but it would also be an unspeakable tragedy. No responsible leader hopes for or advocates this.

My hope has always been for aggressive population control via expanded international programs for family planning and birth control and global educational outreach. It's a tragic mistake that organized religions have aligned against such efforts. But part of the blame rests upon governments and even academic science for allowing population control to become a back-burner issue for reasons of political correctness.

So, how many humans does the Earth need? My answer is far fewer, but with the reduction achieved humanely.

For years I wondered why the Bill and Melinda Gates Foundation (forty-four billion dollars) wasn't tackling birth control. Couldn't they see that it's one of the main problems creating poverty? Well, they woke up! In this must-see TED Talk, Melinda Gates presents the case for birth control. (If you're a woman living in poverty and raising several small children, you desperately want birth control.)

Like the "few and the proud" of the US Marine Corps, the human race has a weighty responsibility: steering our civilization through the bottleneck of potential destruction of the biosphere. This is a bottleneck that could easily result in our complete destruction.

Many of my associates contemplate the question of intelligent life in the Universe. With billions of galaxies full of billions of stars, where are the other intelligent beings? One common guess is that most of those ET civilizations have blown themselves up. They didn't make it. That could easily be our fate. And, as Sir Martin Rees says in his TED Talk, it would be incomparably tragic — far worse than simply a subtotal dieback.

If we are entirely destroyed, there would be no more truth, beauty, or goodness: no one to read our articles, no one to watch our shows, no one to design our products, no one to propagate consciousness, intelligence, and goodness throughout the universe. That is, no one except perhaps the AIs (the artificial intelligences and their robot peripherals).

Can AIs actually replace humans as sources of wisdom, truth, beauty, and goodness? And, if they can replace us, should they? Might they be better at self-control, less damaging to the environment, use less energy, be more efficient, be more productive, and have greater long-term potential for, say, modifiability, and survivability in extreme conditions including outer space?

First, can AIs replace us, say, as sources of love, goodness, compassion, and wisdom: aspects of us that seem to be uniquely human (or, at least, mammalian or multicellular)?

The fact is that AI that is wise, good, spiritual, and compassionate does not exist, and the prospect of it is a long way off (as is the reverse: Terminator-style, intentionally malicious AI).

That is not to say that most human beings have great wisdom or capacity for goodness. (Some do, some of the time.) Here, we're talking about the best of humanity, as in our historic exemplars: e.g., Einstein, Gandhi, or Nelson Mandela.

Might AIs outcompete humanity, though, as sources of scientific truth, creativity, and the engineering of new products and other artifacts? That is less clear.

There are already early examples of AI scientists that make use of large databases to make discoveries. (My own PhD thesis work at Stanford on the RX Project forty years ago was an early example.) Those massive databases are now ubiquitous, and big data is a focus of industry and academia. There are even robotic researchers that design and carry out biologic experiments.

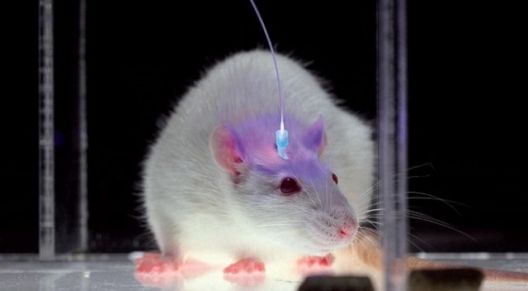

However, even here (except for high-throughput screening) the vast majority of research involves the creative application by human investigators of brand-new lab tools. I hear about cutting-edge research daily at Stanford, such as stunning applications of optogenetics to read out the inner workings of intact neural circuits. But the conception and execution of this research is as spectacularly difficult as any enterprise that brings forth the utmost in human ingenuity. (That will not be replaced by AIs anytime soon, despite current misleading headlines.)

However, over several decades the principles elaborated by neurobiologists will be incorporated into state-of-the-art AI. That research is driven by the push of new technology and the pull of increasing consumer demand. As markets for that AI expand, more dollars will be drawn into those labs (like those of Google, Apple, Facebook, IBM, and Microsoft). The largest near-term market will be the one created by driverless cars (every car company is developing these).

Returning to our starting point, are uniquely human qualities a benefit at least to us if not to planet Earth? Yes, at least for the next several decades they may be. Our strategy must be to minimize the damage we cause and to maximize our efficiency at elaborating wisdom and goodness.

But in the long run (say, after 2100) the future is far less certain. While human efficiency has been honed by millennia of evolution, the AIs have obvious advantages that may come to prevail (if we and they survive that long). Chief among them is their rapidity of evolution. AIs and other artifacts (unlike humans) evolve at the speed of design and at the speed of the mind, initially ours, and later theirs.

AIs have several pivotal advantages over humans: 1) they can clone themselves by the millions in an instant; 2) their memories are perfect (nothing is forgotten); 3) once something is learned (a fact, a principle, a method, a procedure) it can be instantly shared with all the others; 4) there is no death, no fatigue, no distraction, no emotional baggage; 5) they "think" at the speed of light. These advantages may cause them to prevail in the long run (as they currently do on Mars).

When they can autonomously design themselves (this is way in the future), their evolution will be entirely in their minds and hands, and humans may become as marginalized as present-day chimpanzees. We will be irrelevant. That does not imply that our future will be bleak but simply that the torch of progress will have been passed. Our numbers may be entirely controlled by the AIs, and the fate of planet Earth will be in their hands. (My view is that that's apt to be a good thing. Wise AI-based counsel may be as good at steering civilization as it is at controlling driverless cars. Humans right now are steering the car over a cliff.) (But, for an opposing view, see Harvard psychologist Steven Pinker's 2018 book, Enlightenment Now, which is seemingly Pollyannaish but emphasizes that so far we've survived the nuclear age and coped with other existential threats.)

In 1965, I. J. Good was getting at that sentiment in this famous quote:

Let an ultraintelligent machine be defined as a machine that can far surpass all the intellectual activities of any man however clever. Since the design of machines is one of these intellectual activities, an ultraintelligent machine could design even better machines; there would then unquestionably be an 'intelligence explosion,' and the intelligence of man would be left far behind. Thus the first ultraintelligent machine is the last invention that man need ever make.

But note that I. J. Good left out wisdom, beauty, goodness, and compassion. It should be obvious that the AIs must also surpass us at those before we voluntarily turn over the reins of civilization to them. That point may well lie beyond the era when they have mastered the entire contents of the internet and the real-time feed from trillions of sensors around planet Earth.

Also see these related essays:

Pause AGI Research? Forget it!

Realistic AI? Not Possible Now; New Videos of Neurons say "Forget it!"

Let the AIs, not us, formulate a billion-year plan!

Self-Driving Cars: The Future of Autonomy in the 2020s

I welcome substantive, emailed comments on all my essays. With your permission, excerpts may be posted.